Why do people support their past ideas, even when presented with evidence that they're wrong?

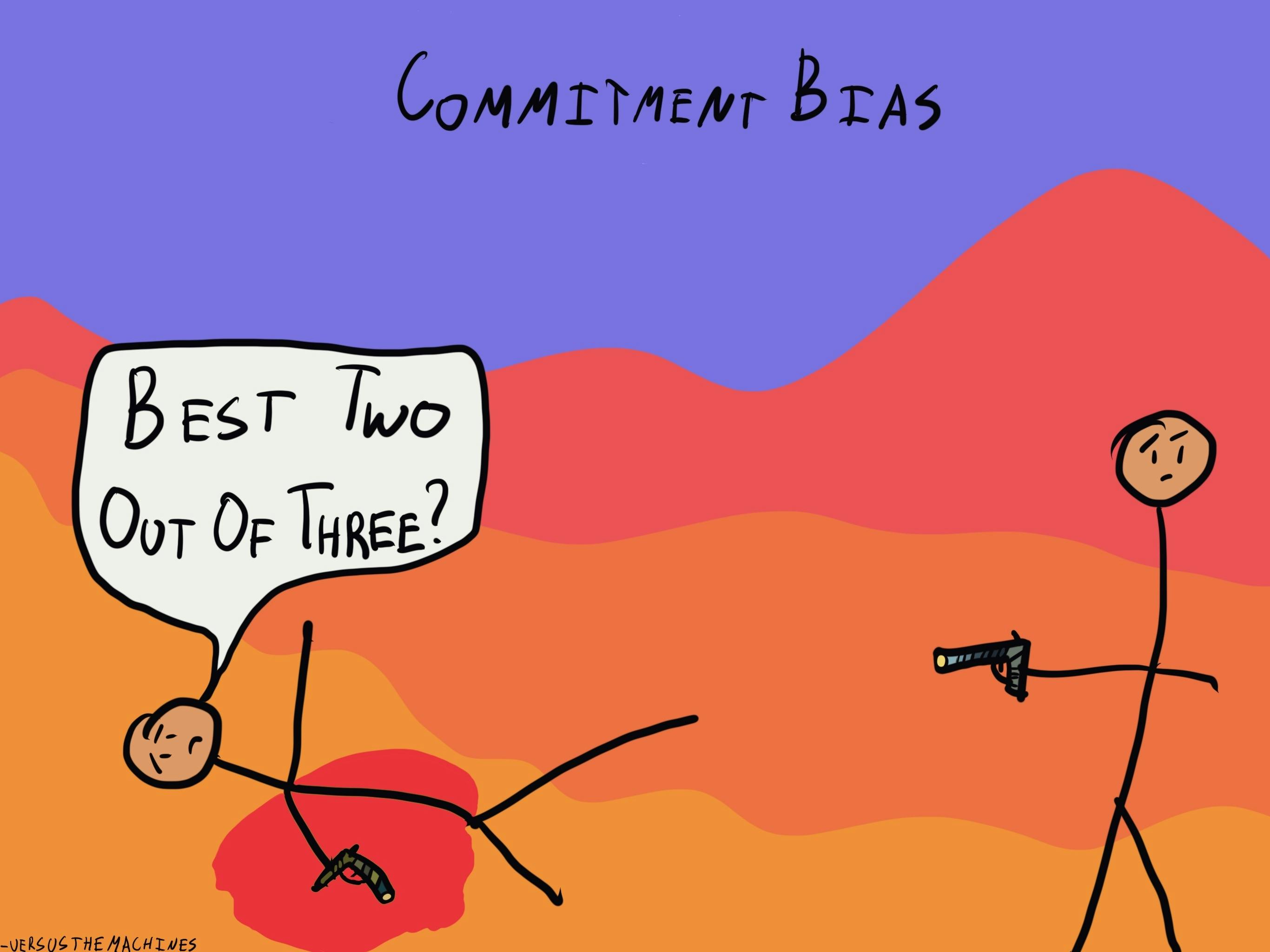

Commitment Bias, explained

What is the Commitment Bias?

Commitment bias, also known as the escalation of commitment, describes our tendency to remain committed to our past behaviors, particularly those exhibited publicly, even if they do not have desirable outcomes.

Where this bias occurs

Imagine you’re wrapping up your first year of university, majoring in anatomy and cell biology. Your goal has always been to attend medical school and become a doctor, so it came as no surprise to anyone when this was the path you chose.

During your first semester, you enrolled in an elective course about the history of modern Europe. While you didn't necessarily dislike your core anatomy classes, you found yourself deeply engaged in the history elective you took on a whim. You enjoyed it so much, in fact, that you took a couple of other history courses in your second semester and dedicated your free time to researching the concepts discussed in class.

Throughout the year, a voice in the back of your mind has been pushing you to change your major and get a Bachelor of Arts in history. However, this decision goes against your long-term goals and everything you've ever said about yourself. There's nothing wrong with changing your mind, yet you feel pressured to keep things consistent. Your hesitation to change your major, even though it's what you truly want to do, is the result of commitment bias.