The Hidden Power of Intellectual Humility

The 2008 financial crisis was undoubtedly a convoluted event in history. Despite its complexity, in its aftermath, many economists aimed to provide bulletproof explanations for what went wrong. Russ Roberts, a notable economist, contributed his own explanation through an essay and subsequent book, both titled Gambling With Other People’s Money. Yet he later revised his essay, admitting that while he still believed in his own theory, he was now more open to other explanations and was continually learning more about issues on which he was supposed to be an expert.1

In an interview, when asked why he changed his mind, Roberts said: “I became deeply aware of my ignorance. I had no idea how the housing market worked … I’ve become more epistemologically humble, which is a fancy word for saying ‘I don’t know.’”2

This one statement highlights a subject of interest in science today: intellectual humility, or “considering that one might be wrong.” It turns out there is a surprising amount of benefit to taking this approach.

The science behind “we don’t know what we don’t know”

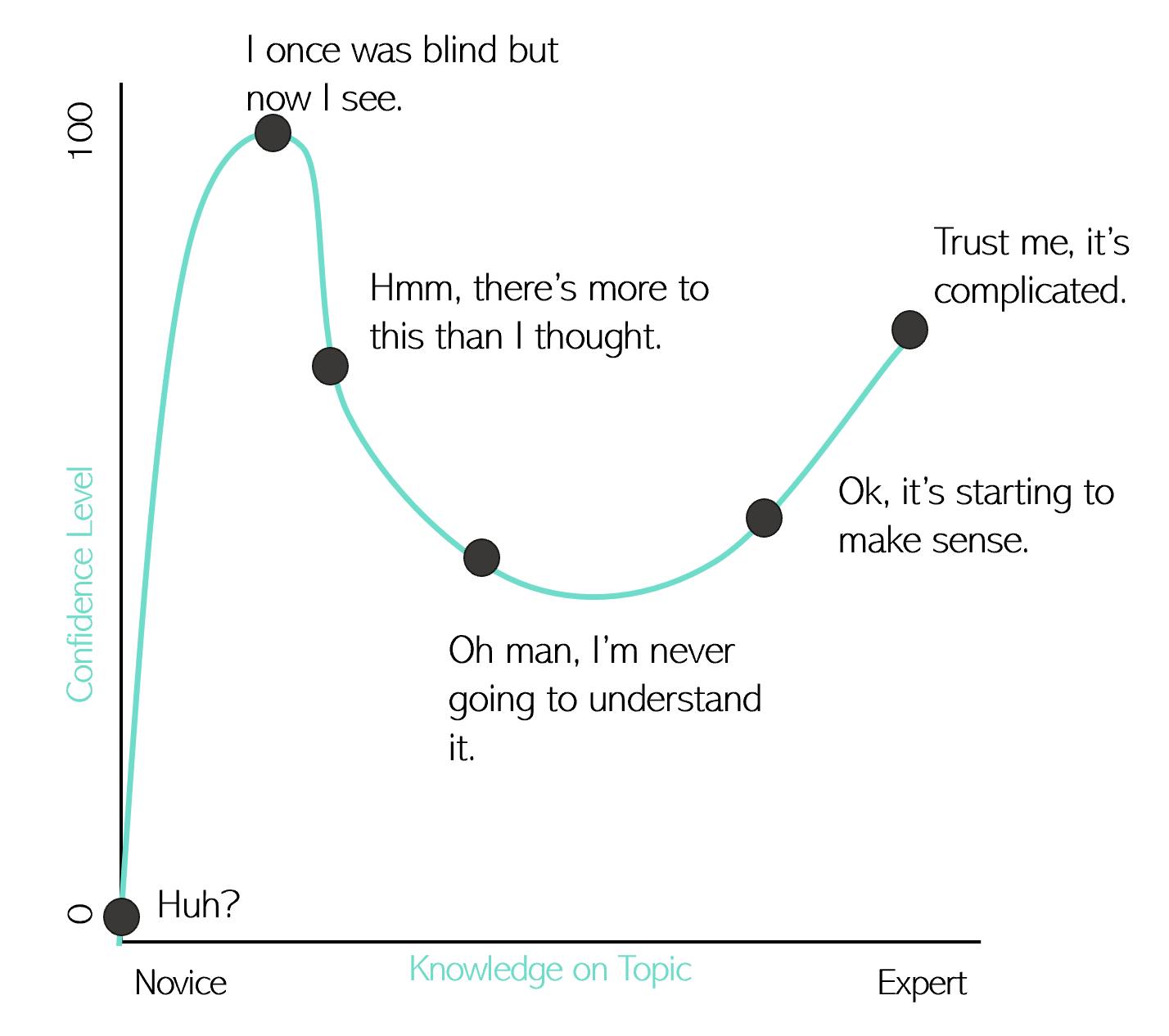

We are cognitively wired to think we are right more often than we think we are wrong. The Dunning–Kruger effect, a well-known cognitive bias, causes us to overestimate our knowledge or ability in any given area. The researchers Dunning and Kruger discovered this effect by testing study participants on tasks including humor, logic, and grammar, and subsequently asking participants to rate their own ability. Those who scored lower on the tasks overestimated their ability significantly: on average, they predicted they would rank in the 62nd percentile, when in fact they were in the 12th.3

Essentially, the less we know about something, the less we realize we know nothing about it. In contrast, the more we know about something, the more aware we are of all the things we don’t know about it. It’s a unique effect that is both frustrating and enlightening: it shows that time and effort may improve not just our ability, but also our awareness of what we still cannot do.

Adapted from: Squad. (2018, December 13). Dunning-Kruger Effect: Definition, Test, Examples & Quiz. Science Terms. https://scienceterms.net/psychology/dunning-kruger-effect/

Why does it happen? First, it’s more difficult to realize we are bad at something when we don’t understand it well. Think of a newbie learning proper form at the gym, or someone with poor grammar trying to write a book. Getting better takes practice, and with that comes the realization of weak points. In addition, we are also cognitively wired to take mental shortcuts, which means our brains avoid spending time thinking about our abilities in any given topic. Yet, with increased reflection on how we learn, practice, and perform, we can improve.

But it’s not only novices who are prone to overconfidence. Daniel Kahneman and Amos Tversky found that even trained statisticians overestimate their ability to use proper sample sizes in their work. In a famous study on the so-called “law of small numbers,” they gave statisticians realistic research proposals and asked them to choose sample sizes, estimate the chance of success or failure, and give advice to a fictional grad student. The majority of the respondents made errors in their work.4 Evidently, even experts fall victim to overestimating their abilities.

One might think that this occurrence doesn’t happen in science. The concept of questioning existing beliefs is at the center of the scientific method, which guides researchers as they look to prove or disprove theories. Yet, according to Julia Rohrer, self-correction isn’t as embedded in the culture of science as we may think.

Rohrer, working with other scientists from the US and Europe, founded the Loss-of-Confidence Project last year. The group invited psychology researchers to submit statements about times they had lost confidence in their previous work, and why. They found that although many scientists privately disclosed a lack of confidence in their findings, those views never became public, which is detrimental to the advancement of science.6 The Loss-of-Confidence Project shows that, as scientists, we need to improve our ability to admit our shortcomings.

More often than we’d like, situations arise in which the “right” answer does not exist. Think back to the viral sensation of “The Dress,” which threw the world into an argument over whether the dress was black and blue or white and gold.

Source: The ACTUAL colour of The Dress revealed. (2015, February 27). The Independent. http://www.independent.co.uk/life-style/fashion/news/the-dress-actual-colour-brand-and-price-details-revealed-10074686.html

This viral photo highlights how an ultimate truth may not exist in some cases.6 Yet we still avoid admitting we might be wrong, especially when results and opinions matter. This desire to be correct contributes to our overestimation of our abilities, and leads us to make potentially dangerous mistakes.

However, research shows that intellectual humility may help us overcome this.

Intellectual humility: how admitting our shortcomings makes our work better

In essence, intellectual humility is recognizing the possibility that we might be wrong. It is a mode of thinking in which we see our limitations and are more open to the experiences and feedback of others.8 Those displaying intellectual humility are generally more curious, driven to pursue knowledge, and receptive to feedback.9

Intellectual humility improves memory and mastery of techniques

Researchers from the University of California, Davis, and the University of Pittsburgh examined how intellectual humility helps individuals seek out challenges and persist through setbacks. They found, across several studies, that intellectual humility boosts our ability to learn, and that those who were encouraged to be more intellectually humble put more effort into learning about a topic they had initially failed to master.9 In addition, improving intellectual humility improved participants’ memory for the information they read, and increased their awareness of their own knowledge.10

Intellectual humility encourages open-mindedness

Besides improving task mastery and memory, intellectual humility also helps us be more open to other viewpoints. In a time of polarization, debate, and disagreement, we are continually provided with information that goes against our opinions or what we hope is true, but we don’t necessarily pay attention to it. Confirmation bias is our tendency to remember and interpret information in a way that is favorable to our pre-existing beliefs. It can get in the way of innovation and success, and can even harm relationships.10

Individuals displaying more intellectual humility are less susceptible to this bias. A study found that those with more intellectual humility spent more time reading sentences expressing opinions that differed from their own, compared to those with lower intellectual humility. In addition, intellectual humility boosts open-mindedness and reduces social vigilance, which helps with collaboration.9 By recognizing and embracing the possibility that we might be incorrect about our beliefs, we become more willing to learn about alternative perspectives and make better decisions because of it.

Intellectual humility boosts innovation

Intellectual humility can also help us innovate, by making us more open-minded. This is because the ability to make connections with individuals who are different from us, and a willingness to learn about other cultures, helps spur creative thinking. A large study by several experts from the United States and Europe found that individuals who actively exposed themselves to new cultures were more productive and more likely to become entrepreneurs.11 Take, for example, Mark Zuckerberg and Steve Jobs. Jobs was an avid supporter of “going East,” and regularly visited ashrams in India to gain clarity when he needed to make difficult decisions. He advised Zuckerberg, who at the time was struggling to position Facebook, to do the same. This had profound benefits for the future direction of Facebook: while he was travelling, Zuckerberg witnessed the close connections people had with one another, and the experience affirmed his sense of Facebook’s mission.12 By helping us remain open-minded, intellectual humility doesn’t just help us become more tolerant of opposing views, but also helps us remain open to experiences that allow innovation and productivity to flourish.

Intellectual humility and certainty

Despite the benefits of intellectual humility, those who have it still have their share of convictions. One study found that those with more intellectual humility held their beliefs more strongly.12 The reason was that these individuals have more information available to support their strong beliefs, due to their tendency to question their own beliefs and ask questions of the other side.13

This level of confidence is often useful, as there are moments that require certainty in our answers. Careers in science, consulting, and other fields require employees to have high confidence in their abilities. Intellectual humility forces us to recognize our shortcomings and question them to provide more certainty. But it is important to be cognizant of our convictions. Michael Lynch, a philosophy professor, states that “It’s bad to think of problems like this like a Rubik’s cube: a puzzle that has a neat and satisfying solution that you can put on your desk.”7 Although certainty is desired in many fields, in many cases it isn’t achievable and may do more damage in the pursuit.

The downside of intellectual humility

While intellectual humility is a fundamental tool for improving memory, learning, and open-mindedness, it comes with its downsides. By definition, intellectual humility does not necessarily suggest a lack of confidence in one’s ability. However, humility exists in both “appreciative and self-abasing” forms.14 Some humble individuals have experienced success, and are more confident in their abilities while still being open to feedback. But for others, humility arises after experiencing consistent failure, causing individuals to underestimate their ability and concede to others in order to avoid negative feedback.14 Therefore, it is important to recognize your behavioral “blind spots” without becoming self-effacing or losing faith in yourself.

Using intellectual humility to your advantage

It’s difficult to be wrong. Sometimes it’s difficult to even admit the possibility of it. Yet doing so can help improve how we learn and how we work, and make us more agreeable to others around us. In an age where we are constantly bombarded with information, asked to deliver results, and left to navigate a polarized and political world, we can use intellectual humility to ensure we make better decisions.

Here are some takeaways on cultivating intellectual humility, in life and at work:

- Ask more questions of those different from you: Josef Pieper, a German philosopher, suggests that “the natural habitat of truth is conversation.”2 Engaging in dialogue with people who hold diverse opinions is the key to unlocking intellectual humility. And do so with a touch of empathy.13 Taking time to understand others and why they may disagree will help bolster your understanding of issues and your work.

- Celebrate failure: Before we can learn from our mistakes, we have to make them. But few of us want to be the only person in the room who admits being wrong, even if there are benefits for learning and doing better work. Leaders should focus on building a culture that embraces failure, to inspire better discussion and better results. The culture of science also needs to focus on building a community of scientists who aim to prove themselves wrong.

- Remember, it’s not all about you: It’s hard to admit when we are wrong, but it may not do the damage we think it does. Those who admit they are wrong are typically not viewed as incompetent, and instead are seen as more friendly and open-minded.9,16

“Do you want to live in a delusion of what you think is true, or do you want to live in the reality of what is true?” (Neil deGrasse Tyson)

Certainly, this year has been one to prove us wrong. Travel plans have changed, education has moved online, companies have pivoted, and leaders have changed their policies. I was wrong about most of my predictions for what 2020 would hold. However, research shows that embracing our errors and admitting our potential to be incorrect can in fact strengthen us. So, just as Russ Roberts admitted his doubts about his explanation of the 2008 financial crisis, we can embrace uncertainty about today’s era and work together to create a better outcome.

References

1. Roberts, R. (2010, April 27). Gambling with Other People’s Money. Mercatus Center. https://www.mercatus.org/publications/financial-markets/gambling-other-peoples-money

2. Historically Thinking. (2019, February 20). Episode 99: Russ Roberts on the 2008 Financial Crisis, Changing Your Mind, and Intellectual Humility. Retrieved September 27, 2020, from https://historicallythinking.org/episode-99-russ-roberts-on-the-2008-financial-crisis-changing-your-mind-and-intellectual-humility/

3. Squad. (2018, December 13). Dunning-Kruger Effect: Definition, Test, Examples & Quiz. Science Terms. https://scienceterms.net/psychology/dunning-kruger-effect/

4. Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

5. Akbareian. (2015). The blue and black (or white and gold) dress: Actual colour, brand, and price details revealed. The Independent. https://www.independent.co.uk/life-style/fashion/news/dress-actual-colour-brand-and-price-details-revealed-10074686.html

6. Rohrer, J. M., Tierney, W., Uhlmann, E. L., DeBruine, L. M., Heyman, T., Jones, B. C., Schmukle, S. C., Silberzahn, R., Willén, R. M., Carlsson, R., Lucas, R. E., Strand, J. F., Vazire, S., Witt, J. K., Zentall, T. R., Chabris, C., & Yarkoni, T. (2018). Putting the Self in Self-Correction: Findings from the Loss-of-Confidence Project [Preprint]. PsyArXiv. https://doi.org/10.31234/osf.io/exmb2

7. Resnick, B. (2019, January 4). Intellectual humility: The importance of knowing you might be wrong. Vox. https://www.vox.com/science-and-health/2019/1/4/17989224/intellectual-humility-explained-psychology-replication

8. Porter, T., Schumann, K., Selmeczy, D., & Trzesniewski, K. (2020). Intellectual humility predicts mastery behaviors when learning. Learning and Individual Differences, 80, 101888. https://doi.org/10.1016/j.lindif.2020.101888

9. Deffler, S. A., Leary, M. R., & Hoyle, R. H. (2016). Knowing what you know: Intellectual humility and judgments of recognition memory. Personality and Individual Differences, 96, 255–259. https://doi.org/10.1016/j.paid.2016.03.016

10. Colombo, M., Strangmann, K., Houkes, L., Kostadinova, Z., & Brandt, M. J. (2020). Intellectually Humble, but Prejudiced People. A Paradox of Intellectual Virtue. Review of Philosophy and Psychology. https://doi.org/10.1007/s13164-020-00496-4

11. Lu, J. G., Hafenbrack, A. C., Eastwick, P. W., Wang, D. J., Maddux, W. W., & Galinsky, A. D. (2017). “Going out” of the box: Close intercultural friendships and romantic relationships spark creativity, workplace innovation, and entrepreneurship. Journal of Applied Psychology, 102(7), 1091–1108. https://doi.org/10.1037/apl0000212

12. Mochari, I. (2015, September 29). Steve Jobs’s Early Advice to Mark Zuckerberg: Go East. Inc.com. https://www.inc.com/ilan-mochari/visit-india-creativity.html

13. Nasser, F. (2019, April 12). The Power of Intellectual Humility. TEDxDonMills. https://www.youtube.com/watch?v=2vjXw_iFOMc

14. Weidman, A. C., Cheng, J. T., & Tracy, J. L. (2018). The psychological structure of humility. Journal of Personality and Social Psychology, 114(1), 153–178. https://doi.org/10.1037/pspp0000112

15. DeGrasse Tyson, N. (2020). Cognitive Bias. Neil deGrasse Tyson Teaches Scientific Thinking and Communication. MasterClass. https://www.masterclass.com/classes/neil-degrasse-tyson-teaches-scientific-thinking-and-communication/chapters/cognitive-bias#transcript

16. Fetterman, A. K., Curtis, S., Carre, J., & Sassenberg, K. (2019). On the willingness to admit wrongness: Validation of a new measure and an exploration of its correlates. Personality and Individual Differences, 138, 193–202. https://doi.org/10.1016/j.paid.2018.10.002

About the Author

Kaylee Somerville

Kaylee is a research and teaching assistant at the University of Calgary in the areas of finance, entrepreneurship, and workplace harassment. Holding international experience in events, marketing, and consulting, Kaylee hopes to use behavioral research to help individuals at work. She is particularly interested in the topics of gender, leadership, and productivity. Kaylee completed her Bachelor of Commerce degree from the Haskayne School of Business at the University of Calgary.