Why do we favor our existing beliefs?

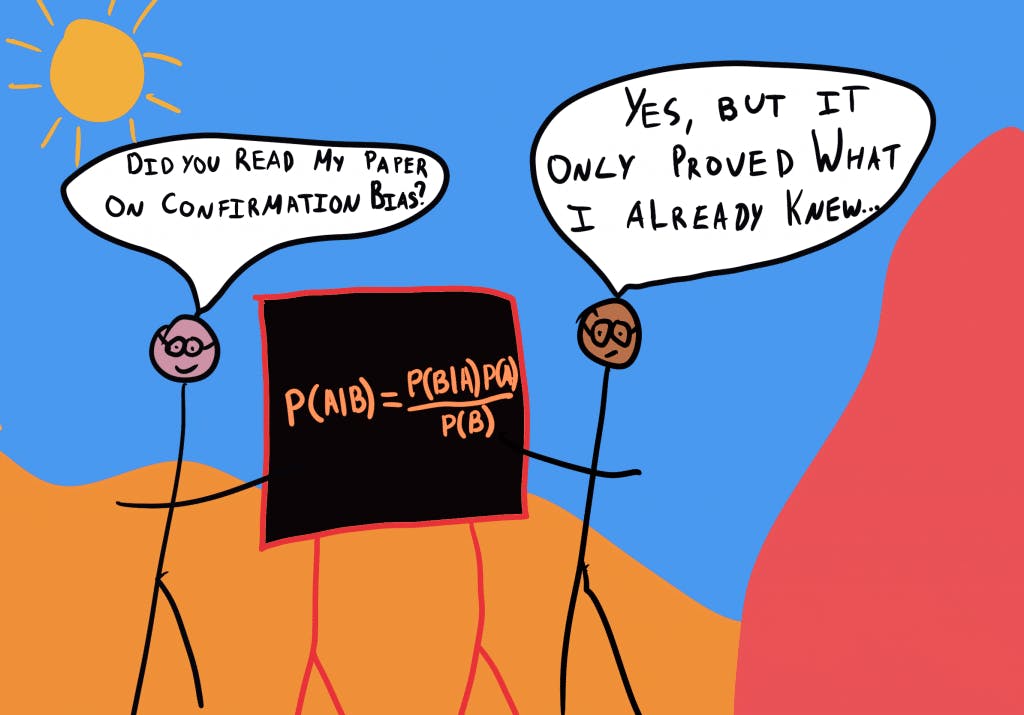

Confirmation Bias, explained.

What is confirmation bias?

The confirmation bias describes our underlying tendency to notice, focus on, and give greater credence to evidence that fits with our existing beliefs.

Where this bias occurs

Consider the following hypothetical situation: Jane is the manager of a local coffee shop. She firmly believes in the motto “hard work equals success.” The coffee shop, however, has seen a slump in sales over the past few months. Because Jane is convinced that hard work is the path to success, she concludes that sales have dipped because her staff is not working hard enough. To address this, Jane puts several measures in place to ensure that her staff is working consistently, including scheduling more employees on each shift. As a result, she ends up spending more on labor, exceeding the shop’s budget and adding to its overall losses.

After consulting with other business owners in her area, Jane identifies her store’s new, less visible location as the primary cause of the sales slump. Her belief that hard work is the most important driver of success led her to blame her employees’ lack of effort for the store’s falling revenue while ignoring evidence that pointed to the true cause: the shop’s poor location. Jane has fallen victim to confirmation bias, which caused her to notice and give greater credence to evidence that fit her pre-existing beliefs.

As this example illustrates, our personal beliefs can weigh us down when conflicting information is present. Not only can they stop us from finding a solution, they may even keep us from identifying the problem in the first place.


Individual effects

Confirmation bias can lead to poor decision-making because it distorts the reality from which we draw evidence. In experiments, decision-makers tend to actively seek out, and assign greater value to, information that confirms their existing beliefs rather than evidence that entertains new ideas.

Confirmation bias also has implications for our interpersonal relationships, particularly in how first impressions cause us to selectively attend to our peers’ subsequent behavior. Once we form an expectation about a person, we tend to reinforce it through our later interactions with them. In doing so, we can appear “closed-minded” or, conversely, remain in relationships that do not serve us.

Systemic effects

Considering the bigger picture, confirmation bias can have troubling implications. Major social divides and stalled policy-making may begin with our tendency to favor information that confirms our existing beliefs and to ignore evidence that does not. The more entrenched we become in our preconceptions, the greater the influence confirmation bias has on our behavior and, consequently, on the people we choose to surround ourselves with. We can trap ourselves in a sort of echo chamber where, without being challenged, our biased thoughts prevail. This is especially concerning for socio-political cooperation and unity across the population.

Confirmation bias can exacerbate social exclusion and tensions. In-group bias is the tendency to favor those with whom you identify and, in doing so, to assign them positive characteristics. That same inclination is not present for the out-group, which consists of individuals with whom you feel you have less in common. Combined with confirmation bias, this creates plenty of opportunity for prejudgment and stereotyping. Confirmation bias may lead us to look for favorable traits in our in-group while overlooking its shortcomings. It may also cause us to be wary of the out-group and to interpret their behavior through the lens of what we already assume.

Confirmation bias is particularly present in the consumption of news and media. As media has become ever easier to access, people have been able to personally curate what they consume. While it is evident that people cling to sources that support their political orientation, confirmation bias can also influence how news is reported. Journalists and media outlets are not immune to bias: they too are selective about their sources, what they choose to present, and how that information is conveyed.2 Zooming out, these outlets and their leanings can have a strong influence on consumers’ knowledge, beliefs, and even voting patterns.

How it affects product

Marketing and reviews are where we can see the largest influence of confirmation bias as it pertains to products. Most consumers rely on product reviews and advertisements to learn about the benefits of various items. For example, influencers and celebrities can be an effective way to promote products, exposing new people to the brand and broadening the customer base. However, it is important to be careful about who you allow to be part of your promotional campaign. By using controversial figures to recommend your product, you may damage the brand’s reputation. If potential customers think poorly of the individual endorsing your product, their first impression of your company will be a negative one. This is confirmation bias at work: if we dislike a celebrity who endorses a product, we are more likely to attend to information suggesting that we will also dislike the product.

Consumers often consult reviews before buying a product, which gives them a good idea of whether the item will be useful and valuable. If their research turns up an abundance of positive reviews, they may be primed to look for evidence that confirms those reviews when they use the product themselves.

Confirmation Bias and AI

When using artificial intelligence, we control how we prompt the system. While these tools are meant to produce unbiased, objective information, the person using them may steer the response in a direction that coincides with their preexisting beliefs. For example, if you are using AI software to research political candidates, the way you phrase the question matters. Depending on the tool, “Why should I vote for X instead of Y?” and “What are the strengths of candidate X and candidate Y?” may turn up very different results. Depending on what we “want to hear,” we may unconsciously prompt the system to reinforce our initial thought pattern.

As mentioned, though we like to think of AI as unbiased, the reality may be a little murkier. AI systems are trained on large data sets to learn about various topics. Because these data sets are so large and wide-ranging, they may reflect the biases present in the world around us. While this may be harmless in some situations, it can also perpetuate negative stereotypes or push a certain narrative as a result of the data used to train the system.

Why it happens

Confirmation bias is a cognitive shortcut we use when gathering and interpreting information. Evaluating evidence takes time and energy, and so our brain looks for shortcuts to make the process more efficient.

Confirmation bias is aided by several processes that act at different stages to protect the individual from cognitive dissonance, the discomfort associated with having one’s beliefs violated. These processes include:

- Selective exposure refers to the filtering of information: the individual avoids challenging or contradictory information.

- Selective perception occurs when the individual observes or is exposed to information that conflicts with their standing beliefs, yet interprets that information in a way that affirms their existing views.

- Selective retention is a major principle in marketing which holds that individuals are more likely to remember information presented to them if it is consistent with what they already believe to be true.3

Our brains use shortcuts

Heuristics are mental shortcuts that we use for efficient, though sometimes inaccurate, decision-making. Though it is debated whether confirmation bias can be categorized as a heuristic, it is certainly a cognitive strategy. Specifically, it helps us avoid cognitive dissonance by searching for and attending to information that agrees with what we already believe.

It makes sense that we do this. Humans often need to make sense of information quickly; however, forming new explanations or beliefs takes time and effort. We have adapted to take the path of least resistance, sometimes out of necessity.

Imagine our ancestors hunting. An intimidating animal is charging toward them, and they have only a few seconds to decide whether to hold their ground or run. There is no time to consider all the variables involved in a fully informed decision. Past experience and instinct might lead them to judge by the size of the animal alone and run, even though the presence of other hunters now tilts the odds of a successful confrontation in their favor. Evolutionary psychologists believe that our modern use of mental shortcuts for in-the-moment decision-making is rooted in past survival instincts.1

It makes us feel good about ourselves

No one likes to be proven wrong, and when information is presented that violates our beliefs, it is only natural to push back. Deeply held views often form our identities, so disproving them can be uncomfortable. We might even believe that being wrong suggests that we lack intelligence. As a result, we often look for information that supports rather than refutes our existing beliefs.

We can also see the effects of confirmation bias in group settings. Clinical psychologist Jennifer Lerner, in collaboration with political psychologist Philip Tetlock, proposed that through our interactions with others, we update our beliefs to conform to the group norm. They distinguished between confirmatory thought, which seeks to rationalize a particular belief, and exploratory thought, which considers many viewpoints before deciding where one stands.

Confirmatory thought in interpersonal settings can produce groupthink, in which the desire for conformity results in dysfunctional decision-making. So, while confirmation bias is often an individual phenomenon, it can also take place in groups of people.

Why it is important

As mentioned above, confirmation bias can be expressed individually or in a group context. Both can be problematic and deserve careful attention.

At the individual level, confirmation bias affects our decision-making. Our choices cannot be fully informed if we are only focusing on evidence that confirms our assumptions. Confirmation bias causes us to overlook pivotal information both in our careers and in everyday life. A poorly informed decision is likely to produce suboptimal results because not all of the potential alternatives have been explored.

A voter might stand by a candidate while dismissing emerging facts about the candidate’s poor behavior. A business executive might fail to investigate a new opportunity because of a negative experience with similar ideas in the past. An individual who sustains this sort of thinking may be labeled “closed-minded.” Because confirmation bias can cause us to miss out on opportunities and make less informed choices, it is important to approach situations, and the decisions they call for, with an open mind.

At a group level, it can produce and sustain the groupthink phenomenon. In a culture of groupthink, decision-making can be hindered by the assumption that harmony and group coherence are the values most crucial to success. This reduces the likelihood of disagreement within the group.

Imagine an employee at a technology firm who does not disclose a revolutionary discovery she has made for fear of changing the firm’s direction. On a wider scale, this bias can keep people from becoming informed about differing views and, by extension, from engaging in the constructive discussion that many democracies are built on.

How to avoid it

Confirmation bias is likely to occur when we are gathering information for decision-making. It occurs subconsciously, meaning that we are unaware of its influence on our decision-making.

As such, the first step to avoiding confirmation bias is being aware that it is a problem. By understanding its effect and how it works, we are more likely to identify it in our decision-making. Psychology professor and author Robert Cialdini suggests two approaches to recognizing when these biases are influencing our decision-making:

First, listen to your gut feeling. We often have a physical reaction to uncomfortable stimuli, such as a salesperson pushing us too far. Even if we have complied with similar requests in the past, we should not use that precedent as a reference point. Recall past actions and ask yourself: “Knowing what I know now, if I could go back in time, would I make the same commitment?”

Second, because the bias is most likely to occur early in the decision-making process, we should focus on starting with a neutral fact base. We can do this by drawing on multiple, diverse sources of information. Though objective reporting is difficult to find, turning to reputable, neutral outlets can give us more agency over our beliefs.

Beyond these two approaches, when hypotheses are being drawn from assembled data, decision-makers should also consider holding discussions that explicitly aim to identify individual cognitive biases in how hypotheses are selected and evaluated. Engaging in debate is a productive way to challenge our views and expose ourselves to information we might otherwise have avoided.

While it is likely impossible to eliminate confirmation bias completely, these measures may help manage cognitive bias and make better decisions in light of it.

How it all started

Confirmation bias was known to the ancient Greeks. The classical historian Thucydides described it in his History of the Peloponnesian War, writing: “It is a habit of mankind to entrust to careless hope what they long for and to use sovereign reason to thrust aside what they do not want.”4

In the 1960s, Peter Wason first described this phenomenon as confirmation bias. In an experiment now known as the Wason selection task, participants were shown four cards. Each card had a color (red or brown) on one side and a number on the other. Two cards lay number-side up, showing 3 and 8, while the other two lay color-side up, one red and one brown. Participants were told that if a card had an even number on one side, its opposite side would be red. They were then asked to test whether this rule was true by turning over two cards of their choosing.

Many participants chose to turn over the 8 and the red card, because both seemed consistent with the rule they had been given. In reality, the red card does little to test the rule: the rule says nothing about what must be on the back of a red card, so whatever number appears there cannot disprove it. Turning over the 8 is useful, since a brown back would break the rule, but one also needs to turn over the brown card to check that the number on its other side is odd.

This experiment demonstrates confirmation bias in action: we seek to confirm what we believe to be true while disregarding information that could potentially disprove it.5
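The logic of the task can be checked mechanically. The minimal Python sketch below asks, for each card, whether any possible hidden side could break the rule. It assumes, purely for illustration, that a hidden number is either 3 (odd) or 8 (even) and a hidden color is either red or brown; these hidden values are our assumption, not details of Wason's original materials.

```python
# Rule under test: "If a card has an even number on one side, its other side is red."
COLORS = ["red", "brown"]
# Assumption for illustration: a hidden number is either odd (3) or even (8).
POSSIBLE_NUMBERS = [3, 8]


def violates_rule(number, color):
    """A card breaks the rule only if its number is even AND its color is not red."""
    return number % 2 == 0 and color != "red"


def could_falsify(visible):
    """True if at least one possible hidden side would make this card break the rule."""
    if isinstance(visible, int):
        # A number is showing, so the hidden side is a color.
        return any(violates_rule(visible, color) for color in COLORS)
    # A color is showing, so the hidden side is a number.
    return any(violates_rule(number, visible) for number in POSSIBLE_NUMBERS)


cards = [3, 8, "red", "brown"]
informative = [card for card in cards if could_falsify(card)]
print(informative)  # [8, 'brown']
```

Only the 8 and the brown card can reveal a violation. The red card, the intuitive "confirming" choice, can never expose a counterexample, whatever is printed on its back.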

Example 1 – Blindness to our own faults

A major study carried out by researchers at Stanford University in 1979 explored the psychological dynamics of confirmation bias. The participants were undergraduate students who held opposing views on capital punishment. They were asked to evaluate two studies on the topic; unbeknownst to them, both studies were fictitious.

One of the fabricated studies provided data supporting the argument that capital punishment deters crime, while the other supported the opposing view: that capital punishment had no appreciable effect on overall criminality in the population.

While both studies were entirely fabricated by the Stanford researchers, they were designed to present “equally compelling” objective statistics. The researchers discovered that responses to the studies were heavily influenced by participants’ pre-existing opinions:

- The participants who initially supported the deterrence argument in favor of capital punishment considered the anti-deterrence data unconvincing and thought the data in support of their position was credible;

- Participants who initially opposed capital punishment showed the mirror-image pattern, finding the data that supported their stance credible and the opposing data unconvincing.

So, after being confronted both with evidence that supported capital punishment and evidence that refuted it, both groups reported feeling more committed to their original stance. The net effect of having their position challenged was a re-entrenchment of their existing beliefs.6

Example 2 – Effects of the internet

The “filter bubble effect” is an example of technology amplifying and facilitating our cognitive tendency toward confirmation bias. The term was coined by internet activist Eli Pariser to describe the intellectual isolation that can occur when websites use algorithms to predict and present information a user would want to see.7

This means that the more we use particular websites and content networks, the more likely we are to encounter content that we prefer. At the same time, algorithms will exclude content that runs contrary to our preferences. We normally prefer content that confirms our beliefs because it requires less critical reflection. So, filter bubbles might favor information that confirms your existing opinions and exclude disconfirming evidence from your online experience.
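The feedback loop described above can be illustrated with a toy ranking model. This is a hypothetical sketch, not how any real website ranks content: the catalogue, the popularity numbers, and the scoring rule (baseline popularity plus the number of past clicks on the same viewpoint) are all assumptions chosen for illustration. We also assume the user clicks only on articles that agree with them.

```python
from collections import Counter

# Hypothetical catalogue: (article id, viewpoint it supports, baseline popularity).
CATALOGUE = [
    ("p1", "pro", 3), ("c1", "con", 3), ("p2", "pro", 2), ("c2", "con", 2),
    ("p3", "pro", 1), ("c3", "con", 1), ("p4", "pro", 0), ("c4", "con", 0),
]


def build_feed(clicks, k=4):
    """Score = popularity + past clicks on the same viewpoint; return the top k."""
    def score(item):
        _, view, popularity = item
        return popularity + clicks[view]
    # sorted() is stable, so articles with equal scores keep catalogue order.
    return sorted(CATALOGUE, key=score, reverse=True)[:k]


def simulate(preferred="pro", rounds=3):
    """Return, for each round, the share of the feed that matches `preferred`."""
    clicks = Counter()
    shares = []
    for _ in range(rounds):
        feed = build_feed(clicks)
        matching = [item for item in feed if item[1] == preferred]
        shares.append(len(matching) / len(feed))
        # Assumption: the user clicks only the articles that confirm their view.
        clicks[preferred] += len(matching)
    return shares


print(simulate())  # [0.5, 0.75, 1.0]
```

Because each click raises the score of similar content, the balanced feed of the first round gives way, by the third round, to one containing only the user's preferred viewpoint, even though opposing articles remain in the catalogue.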

In his seminal book, “The Filter Bubble: What the Internet Is Hiding from You,” Pariser uses the example of internet searches for an oil spill to show the filter bubble effect:

"In the spring of 2010, while the remains of the Deepwater Horizon oil rig were spewing crude oil into the Gulf of Mexico, I asked two friends to search for the term ‘BP’. They’re pretty similar — educated, white, left-leaning women who live in the Northeast. But the results they saw were quite different. One of my friends saw investment information about BP. The other saw the news. For one, the first page results contained links about the oil spill; for the other, there was nothing about it except for a promotional ad from BP."7

If this had been their only source of information, these women might well have formed very different conceptions of the BP oil spill. The search engine tailored its results to the interests and beliefs suggested by each woman’s past searches, choosing results it predicted would fit her reaction to the oil spill. Unbeknownst to them, it facilitated confirmation bias.

While the implications of this particular filter bubble may have been harmless, filter bubbles on social media platforms have been shown to influence elections by tailoring the content of campaign messages and political news to different subsets of voters. This could have a fragmenting effect that inhibits constructive democratic discussion, as different voter demographics become increasingly entrenched in their political views as a result of a curated stream of evidence that supports them.

Summary

What it is

Confirmation bias describes our underlying tendency to notice, focus on, and give greater credence to evidence that fits with our existing beliefs.

Why it happens

Confirmation bias is a cognitive shortcut we use when gathering and interpreting information. Evaluating evidence takes time and energy, and so our brain looks for shortcuts to make the process more efficient. We look for evidence that best supports what we believe to be true because the most readily available hypotheses are the ones we already have. Another reason we sometimes show confirmation bias is that it protects our self-esteem. No one likes feeling bad about themselves, and realizing that a belief they valued is false can have this effect. As a result, we often look for information that supports rather than disproves our existing beliefs.

Example #1 - Blindness to our own faults

A 1979 study by researchers at Stanford found that after being confronted with equally compelling evidence in support of capital punishment and evidence that refuted it, subjects reported feeling more committed to their original stance on the issue. The net effect of having their position challenged was a re-entrenchment of their existing beliefs.

Example #2 - Establishing personalized networks online

Modern preference algorithms have a “filter bubble effect,” which is an example of technology amplifying and facilitating our tendency toward confirmation bias. Websites use algorithms to predict the information and content that a user wants to see. We normally prefer media that confirms our beliefs because it requires less critical reflection. So, filter bubbles might exclude information that clashes with your existing opinions as informed by your online activity.

How to avoid it

Confirmation bias is likely to occur when we are gathering the information needed to make decisions. It is also subconscious; we are unaware of its influence on our decision-making. As such, the first step to avoiding confirmation bias is making ourselves aware of it. Because confirmation bias is most likely to occur early in the decision-making process, we should focus on starting with a neutral fact base. This can be achieved by having multiple objective sources of information.

Related TDL articles

Can Overcoming Implicit Gender Bias Boost a Company’s Bottom Line?

This article argues that gender diversity in a firm is associated with higher firm performance. By addressing and drawing on confirmation bias (among other relevant psychological principles), firms may be able to increase diversity and thereby increase performance.

Learning Within Limits: How Curated Content Affects Education

This article argues that ‘trigger warnings’, modern preference algorithms, and other such cues create a highly curated stream of information that facilitates cognitive biases such as confirmation bias. The author notes that this can prevent us from empathizing with others and from consolidating our opinions in light of differing ones.