The Human Error Behind Fake News with David Rand

Once you go down a rabbit hole of bad information, it’s hard to get out. Not because you’re motivated, necessarily, but because it’s really corrupted your basic beliefs about the world.

Intro

In this episode of the podcast, Brooke is joined by David Rand, professor of Management Science and Brain and Cognitive Sciences at MIT. Together, the two explore David’s research on misinformation, trying to understand why people believe fake news, why it spreads in the first place, and what people can do about it. Brooke and David also discuss real-life applications of strategies to prevent misinformation, especially as they pertain to news outlets and social media platforms like Twitter and Facebook.

Specific topics include:

- The categories of fake news, including blatant falsehoods, hyperpartisan news, and health misinformation

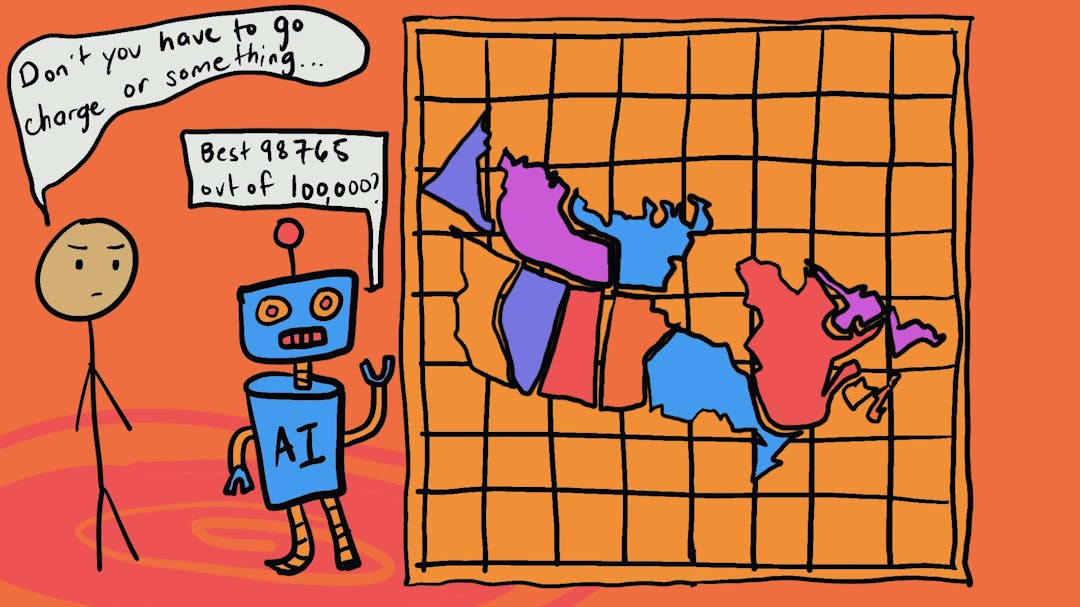

- The roles that bots, algorithms, and humans play in the dissemination of fake news

- How algorithms fail to analyze why people pay attention to certain information

- The tension between our preferences and our limited cognitive abilities

- How our beliefs can be tied to our social identities

- How media platforms can create healthier ecosystems for information processing

- Platforms’ imperative to be proactive, rather than playing catch up with misinformation

- Whether controlling the spread of misinformation infringes on freedom of speech